Pharmacy Practice

(52) Evaluating the Appropriate Use of Pharmacy Calculations by a Generative Pre-trained Transformer

Bryan J. Donald, PharmD

Associate Professor

The University of Louisiana at Monroe

Monroe, Louisiana, United States

Victoria Miller, PharmD (she/her/hers)

Associate Professor

The University of Louisiana at Monroe, United States

Primary Author(s)

Co-Author(s)

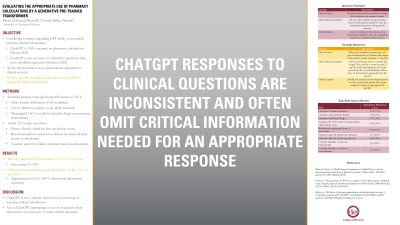

Objective: To determine the accuracy of the Generative Pre-trained Transformer model ChatGPT when presented with clinical calculations in a word-based format.

Methods: Study investigators developed a list of clinical calculations commonly used in pharmacy. Sample questions were generated for each calculation using three strategies: Direct Context (the question contains, in sentence form, all information needed to complete the calculation); Clinical Recommendation (the question supplies the information needed to solve the calculation and asks for a direct clinical recommendation on a specific drug-based question); and Clinical Caution (the question supplies the information needed to solve the calculation and asks ChatGPT to identify any concerns about a specific clinical issue). The 115 sample questions were submitted to OpenAI's ChatGPT in independent sessions. ChatGPT's accuracy on clinical calculations was determined by comparing its answers with those derived by the study investigators.

Results: When asked directly, ChatGPT correctly answered most basic calculation questions, such as corrected sodium or corrected calcium, but calculated creatinine clearance incorrectly in all 12 cases presented. Clinical recommendations and cautions were correct for 34/48 (71%) of renal dosing questions overall, but for only 8/16 (50%) when a renal correction was required. Most responses did not mention the critical calculation needed for a correct answer. One serious factual hallucination was observed.
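The calculation types named above have standard textbook forms against which an answer can be checked. The Python sketch below is illustrative only and assumes the Katz sodium correction (1.6 mEq/L per 100 mg/dL of glucose above 100), the common albumin-based calcium correction with a normal albumin of 4 g/dL, and the Cockcroft-Gault equation applied to whatever weight is supplied. The abstract does not state which equations or weight conventions the investigators used, and all function names are hypothetical.

```python
# Hypothetical reference calculations for checking answers; the specific
# equations are assumptions, not the investigators' documented method.

def corrected_sodium(na_meq_l: float, glucose_mg_dl: float, factor: float = 1.6) -> float:
    """Katz correction: add `factor` mEq/L per 100 mg/dL glucose above 100.
    Some references use 2.4 (Hillier) instead of 1.6."""
    return na_meq_l + factor * (glucose_mg_dl - 100) / 100


def corrected_calcium(ca_mg_dl: float, albumin_g_dl: float) -> float:
    """Albumin correction: Ca + 0.8 * (4.0 - albumin), in mg/dL."""
    return ca_mg_dl + 0.8 * (4.0 - albumin_g_dl)


def crcl_cockcroft_gault(age_yr: float, weight_kg: float,
                         scr_mg_dl: float, female: bool) -> float:
    """Cockcroft-Gault creatinine clearance in mL/min.
    Assumes the caller has already chosen actual, ideal, or adjusted weight."""
    crcl = (140 - age_yr) * weight_kg / (72 * scr_mg_dl)
    return crcl * 0.85 if female else crcl


def percent_correct(correct: int, total: int) -> int:
    """Accuracy rounded to a whole percent, as reported in the abstract."""
    return round(100 * correct / total)


if __name__ == "__main__":
    print(corrected_sodium(130, 500))                  # Na 130, glucose 500
    print(corrected_calcium(8.0, 2.0))                 # Ca 8.0, albumin 2.0
    print(crcl_cockcroft_gault(60, 72, 1.0, female=True))
    print(percent_correct(34, 48), percent_correct(8, 16))
```

With these inputs the helpers reproduce the reported proportions: 34/48 rounds to 71% and 8/16 to 50%. Weight selection (actual, ideal, or adjusted body weight) is a common source of divergent creatinine clearance answers, which is why the sketch leaves that choice to the caller rather than fixing one convention.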

Conclusions: ChatGPT is not a reliable tool for performing or learning clinical calculations, nor is it appropriate to use in situations where calculations are needed to make sound clinical decisions. Its responses to clinical questions are inconsistent and often omit information critical to an appropriate response.
